TL;DR:

- Early validation prevents building products no one wants, reducing startup failure risk.

- Validating assumptions through rapid, inexpensive tests is crucial before full development.

- Continuous post-launch validation informs growth and prevents scaling failures.

Why early validation saves startups: PMF in 2026

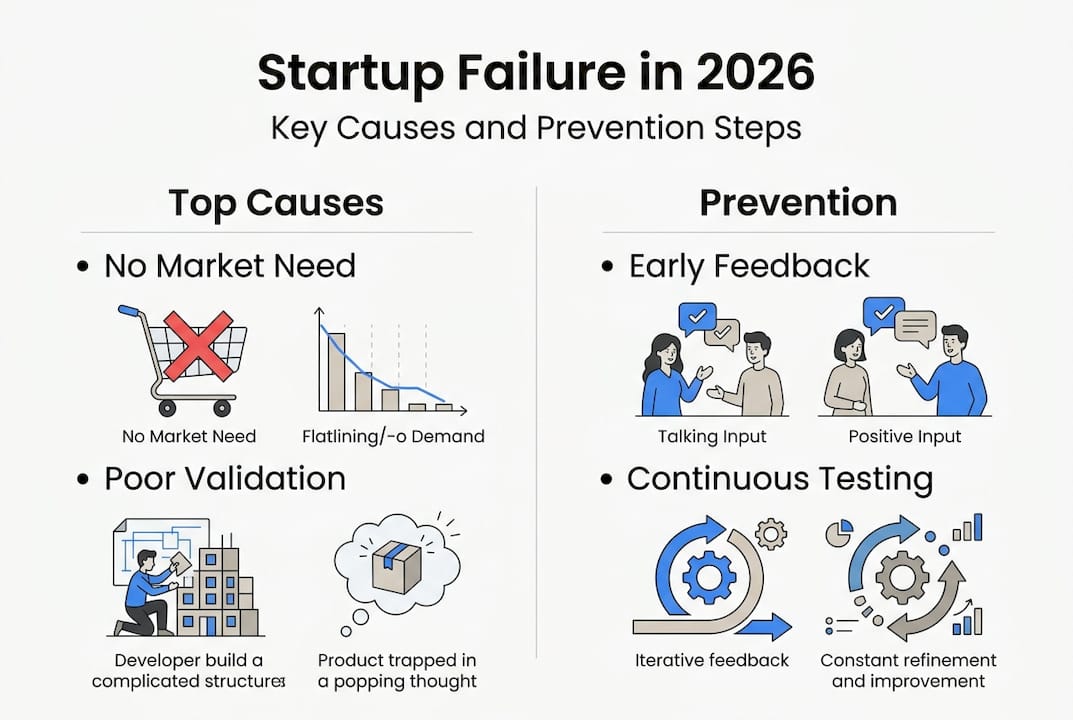

Nearly 42% of startups fail because they build something nobody wants. Not because they ran out of money, not because the team fell apart, but because they skipped the hard conversation at the beginning: does anyone actually need this? Early validation is the discipline that separates founders who ship smart from those who burn through their runway building the wrong product. This article walks you through why validation is non-negotiable, the frameworks that make it repeatable, how to prioritize what to test first, and why validation needs to continue long after your first users sign up.

Table of Contents

- The cost of late validation: Why startups fail

- The Lean Startup: Build-Measure-Learn as a repeatable validation loop

- The riskiest assumptions: What and how to validate first

- Validation never stops: Post-MVP risks and continuous learning

- What most guides miss about early validation: Lessons from repeated startup cycles

- How we help founders validate early: Your next step

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Avoid major startup failures | Poor product-market fit drives roughly 42 to 43% of startup failures, and early validation targets that risk directly. |
| Use Lean Startup frameworks | The Build-Measure-Learn loop helps founders test assumptions quickly, reducing wasted time and resources. |
| Prioritize riskiest tests | Focus on the riskiest assumptions and validate them with real customer input before building your MVP. |
| Validation is ongoing | Continuous testing after MVP launch protects your business from late-stage failures. |
| Look for strong market signals | True validation is about finding strong customer demand, not just completing tasks or running experiments. |

The cost of late validation: Why startups fail

Let’s not bury the lede. 43% of VC-backed startup failures since 2023 trace back to poor product-market fit, and 70% of those companies ran out of money as a direct result. Running out of capital isn’t the root cause. It’s the symptom. The root cause is spending months or years building a product that the market was never ready to pay for.

Founders who skip early validation fall into what’s sometimes called the “vision trap.” You’re so convinced your idea is right that you rationalize every piece of evidence to the contrary. A user says the pricing is too high, and you tell yourself they just don’t understand the value yet. A potential customer says they wouldn’t switch from their current tool, and you decide they’re not your real target anyway. The vision trap is seductive because passion is genuinely important in startups. But passion without evidence is just expensive guessing.

The contrast between validated and non-validated startups is stark when you look at their outcomes side by side.

| Factor | Validated startup | Non-validated startup |
|---|---|---|
| Time to first paying customer | Weeks | Often never |
| Capital efficiency | High | Low |
| Pivot rate | Informed and intentional | Reactive and desperate |
| Runway used before PMF signal | Minimal | Often exhausted |
| Team morale at 12 months | Focused | Chaotic |

The common pitfalls from skipping validation include:

- Building in stealth for 12 months before showing anyone the product

- Assuming competitors validate your market without checking willingness to pay

- Talking only to friendly contacts who won’t give honest feedback

- Mistaking feature requests for demand signals instead of real purchase intent

- Waiting until the product is “ready” before testing with real users

Understanding why validation matters at the earliest stage isn’t just theoretical. Poor product-market alignment creates downstream problems that compound fast: wasted engineering hours, misaligned marketing spend, and a team chasing metrics that don’t connect to real customer behavior.

“The biggest waste in product development isn’t bad code. It’s building the right code for the wrong problem.”

That insight should live on every founder’s monitor. Before you worry about tech stack, architecture, or feature sets, your first job is confirming that the problem you’re solving is real, urgent, and something people will pay to fix.

The Lean Startup: Build-Measure-Learn as a repeatable validation loop

The Build-Measure-Learn loop from Eric Ries’s Lean Startup methodology is one of the most durable frameworks in early-stage product development. It’s not a metaphor. It’s a literal operational process. The Build-Measure-Learn loop tests your riskiest assumptions through the smallest possible experiments before you commit to a full build. Landing pages, customer interviews, paper mockups, and smoke tests all qualify as “build” phases at the earliest stage.

Here’s how the loop works in practice for an early-stage SaaS founder:

- Identify your riskiest assumption. What one thing, if proven wrong, would kill your business? Start there. Not with your favorite feature, not with the UI.

- Design the smallest test. A landing page with a “get early access” email capture costs almost nothing. A structured 30-minute customer interview costs only your time. These are your cheapest experiments.

- Set a clear success metric before you run the test. “We’ll consider this validated if 15% of visitors sign up” is useful. “Let’s see what happens” is not.

- Run the experiment with real people. Not friends, not family. Strangers from your target market who have no social obligation to be nice to you.

- Measure honestly. The data doesn’t care about your feelings. If the numbers don’t hit your threshold, you learned something important.

- Learn and decide. Pivot the assumption, adjust the approach, or kill the idea. Then start the loop again.

A practical example: a founder building a B2B invoicing SaaS for freelance designers could skip months of development by running a landing page test first. Set up a simple page describing the core value prop, drive 200 targeted visitors using a small LinkedIn ad budget, and measure email signups. If you get a 20% conversion rate, you have a strong signal. If you get 1%, you need to rethink the messaging or the market before writing a single line of code.
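
To make the landing page example concrete, here is a minimal Python sketch of how you might score a test against a threshold you set in advance. The class and function names are hypothetical, and the Wilson score interval is my own addition rather than part of the Lean Startup method: with only a couple of hundred visitors, the measured rate is a rough estimate, so the sketch checks whether the plausible range clears your bar instead of trusting the raw percentage.

```python
import math
from dataclasses import dataclass
from typing import Tuple


@dataclass
class LandingPageTest:
    visitors: int             # people who saw the page
    signups: int              # people who left their email
    success_threshold: float  # chosen BEFORE the test runs, e.g. 0.15

    @property
    def conversion_rate(self) -> float:
        return self.signups / self.visitors if self.visitors else 0.0


def wilson_interval(successes: int, n: int, z: float = 1.96) -> Tuple[float, float]:
    """Approximate 95% Wilson score interval for a proportion."""
    if n == 0:
        return (0.0, 1.0)
    p = successes / n
    denom = 1 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    margin = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return (max(0.0, center - margin), min(1.0, center + margin))


def verdict(test: LandingPageTest) -> str:
    """Compare the plausible range of the true rate with the preset threshold."""
    low, high = wilson_interval(test.signups, test.visitors)
    if low >= test.success_threshold:
        return "validated"
    if high < test.success_threshold:
        return "not validated"
    return "inconclusive: collect more traffic"
```

With 200 visitors and a 15% threshold, `verdict(LandingPageTest(200, 2, 0.15))` comes back as not validated, while a result sitting right at the threshold is reported as inconclusive. That third outcome is the useful part: it tells you to buy more traffic before you either celebrate or pivot.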

Here’s a breakdown of where validation data comes from at different stages:

| Validation source | Stage | What it tells you |
|---|---|---|
| Customer interviews | Pre-build | Problem urgency and frequency |
| Landing page signups | Pre-build | Demand signal and messaging fit |
| Prototype feedback | Early build | Usability and feature priority |
| Activation rate | Post-launch | Whether users get value quickly |
| Retention at 30 days | Post-launch | True product-market fit signal |

Following Lean Startup best practices means treating every assumption as a hypothesis, not a fact. A lean product creation guide can help you systematize this process so you’re not reinventing the loop every sprint. For deeper context on execution at the development level, a startup software development guide covers how smart teams translate validated assumptions into actual build priorities.

Pro Tip: Always start with the cheapest, fastest test. An email list, a Typeform survey, and five honest conversations will tell you more than three months of coding. Save the engineering hours for ideas that have already survived a reality check.

The riskiest assumptions: What and how to validate first

Not all assumptions are equal. Some are just preferences. Others, if wrong, will collapse your entire business model. The skill of early-stage validation is learning to tell the difference and attacking the most dangerous ones first.

Your riskiest assumptions typically fall into three categories:

- Customer need assumptions: Does the problem you’re solving actually exist in a painful, recurring way for your target user? People often acknowledge a problem exists without it being urgent enough to pay for a solution.

- Willingness to pay assumptions: Even if users have the problem, will they spend money to fix it? Free alternatives, switching costs, and budget constraints can kill a product that solves a real problem.

- Technical feasibility assumptions: Can you actually build what you’re promising at a price point and performance level that works for the business? This one is especially critical for non-technical founders who may not know what’s realistic.
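
If you want a lightweight way to decide which of these to attack first, one option is to score each assumption and sort the list. The sketch below is an illustration, not an established formula: the `Assumption` class, the 1 to 5 scales, and the `impact × lack of evidence` scoring are all assumptions of mine, and the example scores are made up for the invoicing SaaS scenario used earlier.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Assumption:
    statement: str
    category: str         # "customer need", "willingness to pay", "technical feasibility"
    impact_if_wrong: int  # 1 (minor) to 5 (kills the business), a subjective score
    evidence: int         # 1 (pure guess) to 5 (backed by real customer behavior)

    @property
    def risk(self) -> int:
        # High impact combined with little evidence means: test this first.
        return self.impact_if_wrong * (6 - self.evidence)


def rank_by_risk(assumptions: List[Assumption]) -> List[Assumption]:
    """Return assumptions ordered from riskiest to safest."""
    return sorted(assumptions, key=lambda a: a.risk, reverse=True)


# Hypothetical scores for a B2B invoicing tool aimed at freelance designers.
backlog = [
    Assumption("Designers find invoicing painful enough to seek a tool",
               "customer need", impact_if_wrong=5, evidence=2),
    Assumption("They will pay a monthly fee for it",
               "willingness to pay", impact_if_wrong=5, evidence=1),
    Assumption("We can build the invoicing flow with our current skills",
               "technical feasibility", impact_if_wrong=3, evidence=4),
]

for item in rank_by_risk(backlog):
    print(item.risk, item.category, "-", item.statement)
```

The numbers matter less than the conversation they force. If you cannot agree on how much evidence you really have for an assumption, that disagreement is itself a sign the assumption needs testing.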

Y Combinator advises founders to talk to at least 30 prospects before writing a single line of code. Thirty is a rule of thumb, not a statistical threshold, but it is roughly the point at which recurring patterns start to outweigh individual anecdotes and you stop fooling yourself with cherry-picked conversations. With far fewer conversations, you’re still in confirmation bias territory.

One critical insight that surprises many first-time founders: having no direct competitors is not a good sign. It usually means there’s no real market, not that you’ve found a hidden opportunity. Competitive markets are hard, but they confirm demand exists. A market with zero competitors should trigger deep investigation, not celebration.

The practical methods for testing riskiest assumptions include:

- Structured customer interviews using the Mom Test framework (asking about behavior and past experiences, not opinions about your idea)

- Landing pages with clear value propositions and a measurable call to action, usually an email capture or pre-order button

- Paper MVPs or clickable prototypes built in Figma or similar tools, which let you test user flows without writing backend code

- Concierge MVPs, where you manually deliver the service your software would eventually automate, to confirm people will pay before you invest in automation

Using an idea validation checklist helps you stay systematic rather than reactive. Combine this with a solid MVP validation checklist to make sure you’re tracking the right metrics at each stage. Strong execution patterns from a full cycle development approach can help teams align testing and building into a coherent workflow.

Pro Tip: Talk to 30 real prospects before you open your laptop to code. Keep the conversations short, focused, and unbiased. Ask them to describe the last time they dealt with the problem you’re solving. Their stories are worth more than any market research report.

Validation never stops: Post-MVP risks and continuous learning

Here’s something most early-stage content won’t tell you: shipping your MVP is not the finish line for validation. It’s the beginning of a more expensive round of testing. 20% of Series B+ startups still fail due to product-market fit problems despite having early traction. Early traction can be misleading. Your first 100 users might be enthusiastic early adopters who are fundamentally different from the mainstream customer you’ll need to reach at scale.

Late-stage PMF failure happens in predictable patterns. A startup gets strong initial signups, raises a Series A, scales marketing spend, and then watches churn spike because the product was only really working for a narrow niche. By the time the data makes this clear, the company has hired a full team around the wrong product direction. Unwinding that is slow, expensive, and demoralizing.

Post-MVP validation checkpoints should be built into your growth process:

- Week 2 activation check: Are users completing the core action your product was designed for? If not, something is broken in onboarding or the value proposition.

- Day 30 retention review: Are users still active a month after signup? Retention at 30 days is one of the strongest early PMF signals you can measure.

- Cohort analysis by acquisition channel: Do users from different sources behave differently? This tells you which channels bring quality users versus noise.

- Net Promoter Score at 60 days: Ask users how likely they are to recommend your product. Low scores are a warning sign before churn becomes obvious.

- Quarterly assumption audit: Revisit the assumptions you validated pre-launch. Markets shift. Customer behavior evolves. What was true at launch may not be true six months later.
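
The day 30 retention review and the cohort analysis by channel can be combined into one small calculation. The Python sketch below assumes a particular definition of "retained" (the user performed the core action at least once in the 7 days starting 30 days after signup), and the `User` fields and channel names are hypothetical. Your analytics tool may define retention differently, so treat this as a starting point.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Dict, List


@dataclass
class User:
    user_id: str
    channel: str             # acquisition source, e.g. "linkedin_ads"
    signup: date
    active_days: List[date]  # days on which the user performed the core action


def retained_at_day_30(user: User, window_days: int = 7) -> bool:
    """True if the user was active in the window that starts 30 days after signup."""
    start = user.signup + timedelta(days=30)
    end = start + timedelta(days=window_days)
    return any(start <= day < end for day in user.active_days)


def retention_by_channel(users: List[User], as_of: date,
                         window_days: int = 7) -> Dict[str, float]:
    """Day 30 retention rate per acquisition channel."""
    eligible = defaultdict(int)
    retained = defaultdict(int)
    for user in users:
        # Skip users who signed up too recently to have finished the window.
        if user.signup + timedelta(days=30 + window_days) > as_of:
            continue
        eligible[user.channel] += 1
        if retained_at_day_30(user, window_days):
            retained[user.channel] += 1
    return {channel: retained[channel] / count for channel, count in eligible.items()}
```

Excluding users who have not yet reached the end of the window keeps recent signups from dragging the rate down artificially. A large gap between channels is the signal to look for: it suggests one source is bringing in users who match your niche and another is bringing in noise.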

“Early traction is evidence that something is working. It is not evidence that the right thing is working at the right scale.”

Embedding a feedback loop into your growth strategy isn’t optional. Customer success calls, in-app surveys, churn interviews, and usage analytics all feed your ongoing validation process. Companies that treat post-launch validation as seriously as pre-launch validation scale with far more predictability. The frameworks for scaling that actually work are built on continuous learning, not a one-time product-market fit declaration.
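
Of the survey signals mentioned above, the Net Promoter Score is the easiest to compute yourself. The standard calculation takes answers on a 0 to 10 scale, counts 9 and 10 as promoters and 0 through 6 as detractors, and subtracts the detractor percentage from the promoter percentage. The function name and input format in this sketch are my own.

```python
from typing import Iterable


def net_promoter_score(scores: Iterable[int]) -> float:
    """NPS from 0-10 survey answers: % promoters (9-10) minus % detractors (0-6)."""
    answers = list(scores)
    if not answers:
        raise ValueError("need at least one survey response")
    if any(s < 0 or s > 10 for s in answers):
        raise ValueError("scores must be between 0 and 10")
    promoters = sum(1 for s in answers if s >= 9)
    detractors = sum(1 for s in answers if s <= 6)
    return 100 * (promoters - detractors) / len(answers)
```

The result ranges from -100 to 100. With a small user base, a handful of answers can swing the score sharply, so read it alongside retention and churn interviews rather than on its own.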

What most guides miss about early validation: Lessons from repeated startup cycles

Most validation guides treat the process like a checklist. Talk to users: check. Build a landing page: check. Ship an MVP: check. But that framing is exactly what gets founders into trouble. Validation isn’t a series of tasks you complete and move on from. It’s a living system that runs parallel to everything else you’re doing.

The most common mistake I see from founders isn’t skipping validation entirely. It’s confusing activity with learning. You can have 50 customer conversations and walk away with nothing actionable if you’re asking the wrong questions or only talking to people who already like you. You can build a landing page and get 500 email signups that represent zero real purchase intent if your call to action doesn’t create any friction or commitment.

Strong signals look like: someone asking how to pay you before the product exists. A prospect introducing you to three colleagues unprompted. A customer saying they’d be genuinely upset if you shut the product down. Weak signals look like: “This is a cool idea.” “I’d probably use this.” “Send me more info.”

Humility is the most underrated validation skill. The founders who build the best products are usually the ones who go into every conversation expecting to be wrong and genuinely curious about why. Understanding how MVPs validate startup ideas in practice means accepting that the goal isn’t to confirm your plan. It’s to find out as cheaply as possible where your plan is wrong.

Focus on signal, not noise. Ten prospects who would pay tomorrow beat a hundred who say “maybe someday.”

How we help founders validate early: Your next step

If you’ve read this far, you understand that validation is not something you can hand off to a to-do list. It requires technical judgment, product thinking, and the ability to translate real user feedback into build decisions fast.

At hanadkubat.com, I work directly with non-technical founders to build production-ready MVPs in 4 to 12 weeks, designed from the start to test your riskiest assumptions. There’s no agency overhead, no project manager in the middle, and no bloated scope. You get Fortune 500 engineering discipline applied at founder speed. Every recommendation I make is something I’ve tested on my own SaaS products first. If you’re ready to stop theorizing and start building smart, let’s talk about your next step.

Frequently asked questions

What is early validation and why is it important for startups?

Early validation means testing your core assumptions about the product, market, and customer before investing significant time or money. It prevents expensive mistakes by confirming that real demand exists before you commit to a full build. That is the point of the Build-Measure-Learn loop: test each assumption with the smallest possible experiment first.

What are the top causes of startup failure?

The leading cause is poor product-market fit or no real market need, which accounts for 42 to 43% of startup failures in large post-mortem analyses, with capital running out as the downstream symptom rather than the root cause.

How do founders validate the riskiest assumptions?

Founders use structured customer interviews, landing page experiments, and lightweight MVPs to gather real feedback before committing to a full build. The smallest viable experiment that can answer your core question is always the right starting point.

Should validation continue after launching an MVP?

Absolutely. 20% of Series B+ startups still fail from product-market fit issues despite early traction, which means post-launch validation checkpoints like retention analysis and cohort reviews are essential parts of any growth strategy.

Is having no competition a good sign for a SaaS startup?

No. Lack of direct competitors usually signals no proven market demand rather than a hidden opportunity. Founders should investigate deeper and validate customer urgency before treating an empty market as a green light.