TL;DR:

- An MVP is a simple, working product that delivers core value and enables fast learning.

- Founders should validate demand with cheap tests like landing pages or manual processes before coding.

- Success depends on clear validation, strong architecture, and continuous learning rather than speed alone.

Most founders think MVP means shipping something half-finished and hoping nobody notices. That’s exactly backwards. An MVP, or Minimum Viable Product, is the simplest version of your product that delivers real value to real users — and it’s one of the most strategic moves an early-stage founder can make. The problem isn’t that founders build too little. It’s that they build too much, too soon, without testing whether anyone actually wants what they’re making. This guide cuts through the noise and gives you a clear, practical framework for understanding, building, and measuring an MVP — no technical co-founder required.

Table of Contents

- Understanding MVP: Definition and core principles

- MVP workflow for non-technical founders: From idea to launch

- Common MVP pitfalls and how to avoid them

- How to measure MVP success and what to do next

- Why fast launch isn’t always better: Our expert MVP perspective

- Ready to build your MVP? Get expert guidance

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| MVP is not a prototype | A Minimum Viable Product is a real product solving a key problem, not a rough draft or demo. |
| Validate before coding | Start with the cheapest tests and manual or no-code solutions to prove real demand fast. |
| Quality foundations matter | Production-grade basics, modularity, and early feedback will save you from costly rewrites later. |
| Watch out for common traps | Most MVPs fail due to overbuilding, missed validation, or neglecting fundamentals, not lack of features. |

Understanding MVP: Definition and core principles

The term “Minimum Viable Product” was popularized by Eric Ries in The Lean Startup, but it’s been misused ever since. Founders hear “minimum” and think it means cutting corners. It doesn’t. The word “viable” is doing the heavy lifting here.

An MVP is the simplest version of a product that delivers core value to early users for validation and feedback — with the least amount of effort wasted on features nobody asked for.

That distinction matters. A prototype is a mockup. A beta is a feature-complete product being tested for bugs. An MVP is neither. It’s a real, working product that solves one specific problem for a clearly defined group of users. It’s not a throwaway. It’s a learning tool.

Here are the three core principles every MVP must follow:

- Solves one specific problem. Not five. Not three. One. If you can’t describe your MVP’s value in a single sentence, you’ve already overbuilt it.

- Delivers genuine core value. Users should walk away feeling like the product actually helped them — not like they were guinea pigs for a broken experiment.

- Enables fast, real feedback. The whole point is to learn quickly. If your MVP doesn’t generate actionable data from real users, it’s not doing its job.

Quality still matters here. A buggy, confusing product won’t give you useful feedback — it’ll just frustrate people. The goal is minimal scope, not minimal quality. When you’re validating startup ideas, the discipline is in deciding what not to build, not in building things poorly.

Think of it like a restaurant testing a new dish. They don’t build a whole new kitchen. They make the dish, serve it to a table, and watch what happens. That’s MVP thinking.

MVP workflow for non-technical founders: From idea to launch

You don’t need to write a single line of code to start building your MVP. That surprises a lot of founders, but it’s true. The fastest path to validation starts with cheap tests, not code.

Here’s a simple weekly workflow to get from idea to your first 10 users:

- Week 1: Define your hypothesis. Who is your user? What problem are you solving? What do you believe to be true that you need to test? Write it down in one sentence.

- Week 2: Map the cheapest test. Before you build anything, identify the fastest way to find out if people want this. A landing page, a manual process, a fake button — pick the lowest-effort option.

- Weeks 3-4: Run your test. Execute the test with real people. Talk to them. Watch what they do. Collect data.

- Weeks 5-6: Lock scope and build only if demand is proven. If your test shows real demand, build the core feature. Lock the spec. Don’t add extras. Launch to your first 10 users.

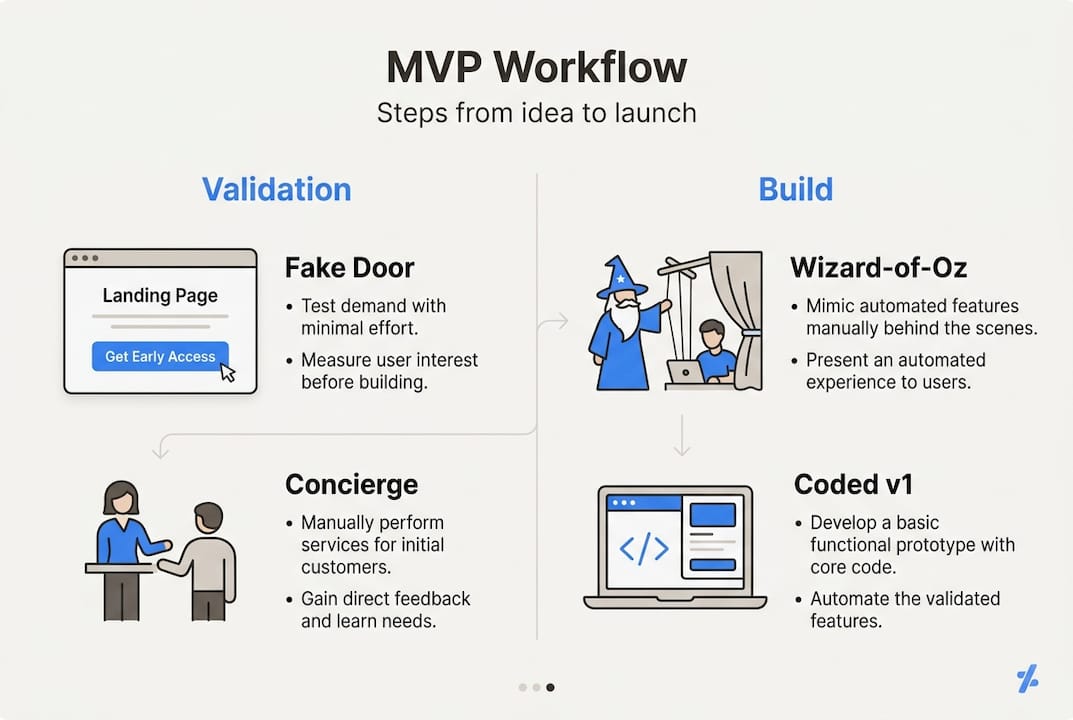

Not all tests look the same. Here’s a breakdown of the main options:

| Test type | What it looks like | When to use it |
|---|---|---|
| Fake door | Landing page with a sign-up button | Testing demand before building anything |
| Concierge/manual | You do the service by hand | Validating the value before automating |
| Wizard of Oz | Looks automated, but you’re behind the scenes | Testing UX before writing backend code |
| Coded v1 | A real, minimal product | Only after the above tests show clear demand |

Pro Tip: Start at the top of that table and work your way down. Most founders jump straight to “coded v1” and waste months. A landing page test takes hours. A concierge test takes days. Both give you real signal.
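If someone on your team is comfortable with a little scripting, the signal from a fake-door test can be reduced to one number: the share of visitors who clicked the sign-up button. The sketch below is a minimal illustration, not a prescribed method. The function names and the `target_rate` parameter are hypothetical, and the article does not specify a target conversion rate, so pick one yourself before the test starts.

```python
def conversion_rate(visitors, signups):
    """Share of landing-page visitors who signed up (0.0 if nobody visited)."""
    if visitors <= 0:
        return 0.0
    return signups / visitors


def fake_door_result(visitors, signups, target_rate):
    """Compare the observed sign-up rate with a target chosen in advance.

    target_rate is an assumption you set before running the test;
    it is not a benchmark taken from this guide.
    """
    rate = conversion_rate(visitors, signups)
    return {"rate": rate, "met_target": rate >= target_rate}


if __name__ == "__main__":
    # Hypothetical numbers: 200 visitors, 30 sign-ups, 10% target.
    print(fake_door_result(visitors=200, signups=30, target_rate=0.10))
```

Fixing the target before the test runs keeps you from reinterpreting a weak result as a success afterwards.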

If you want to build an MVP fast without coding, the concierge and Wizard-of-Oz methods are your best friends. And if you’re wondering how to launch an MVP without a technical co-founder, these manual approaches are exactly where you start.

Manual steps aren’t cheating. They’re the smartest thing you can do before spending money on development.

Common MVP pitfalls and how to avoid them

Even with a solid plan, founders make the same mistakes over and over. Knowing them in advance is half the battle.

A study of 125 real MVP projects found that 68% fail after launch — not because the idea was bad, but because of fragile architecture, poor data assumptions, and no single owner accountable for outcomes. That’s a systems problem, not a product problem.

Here are the most common pitfalls:

- Overbuilding. Adding features “just in case” before you’ve validated anything. This is the number one killer.

- Scope creep. The spec grows every week because you keep thinking of new things to add. Lock it and protect it.

- Skipping cheap tests. Jumping straight to code without running a fake-door or concierge test first.

- Quality shortcuts. Cutting corners on fundamentals like error handling, logging, or data structure. These create technical debt that’s expensive to fix later.

- No single owner. If everyone is responsible, no one is. Assign one person to own the MVP outcome.

Here’s how the top failure causes map to prevention strategies:

| Failure cause | Prevention |
|---|---|
| Fragile architecture | Build modular, observable foundations from day one |
| Poor data assumptions | Test assumptions with real users before automating |
| No single owner | Assign one accountable decision-maker |
| Scope creep | Lock the spec before development starts |
| Skipped validation | Run at least one cheap test before writing code |

Pro Tip: Even in a minimal build, invest in observability. That means logging, error tracking, and basic analytics. You can’t learn from data you’re not collecting. “Move fast” doesn’t mean “ignore your foundations.”
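For whoever writes the code, here is a rough sketch of what "logging, error tracking, and basic analytics" can look like in a very small build. It uses only Python's standard library. The event names and the `track` and `run_core_feature` helpers are hypothetical, and a real product would likely send these events to a dedicated tool rather than a log file.

```python
import json
import logging
import time

logger = logging.getLogger("mvp")


def track(event, **fields):
    """Emit one analytics event as a single JSON log line and return it."""
    record = {"event": event, "ts": time.time(), **fields}
    logger.info(json.dumps(record, sort_keys=True))
    return record


def run_core_feature(user_id, action):
    """Run the one core action and record whether it succeeded or failed."""
    try:
        result = action()
    except Exception:
        # logger.exception writes the message plus the full traceback.
        logger.exception("core_feature_failed user_id=%s", user_id)
        track("core_feature_failed", user_id=user_id)
        return None
    track("core_feature_used", user_id=user_id)
    return result


if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO)
    run_core_feature("user-1", lambda: "ok")
```

One event per use of the core feature, plus one per failure, is enough to tell whether people are using the product and whether it is breaking for them.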

For a deeper look at avoiding these traps, the common MVP pitfalls guide covers them in detail. And if you’re unsure what to include, the MVP features every startup founder needs is a useful reference.

How to measure MVP success and what to do next

Once your MVP is live, your job shifts from building to learning. Most founders don’t know what “success” actually looks like at this stage, so they either declare victory too early or panic unnecessarily.

Two numbers matter most:

- The “very disappointed” test. Survey your early users: “How would you feel if you could no longer use this product?” If 40% or more say “very disappointed”, you have strong product-market fit signal. Below 40%? You need to learn more before scaling.

- Retention above 20%. Are users coming back? Even modest retention at this stage is meaningful. If people use it once and disappear, the core value isn’t landing.
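Both numbers are simple arithmetic, and a short script can keep the calculation consistent from week to week. The sketch below is one possible way to compute them. The function names are hypothetical, and it assumes "retention" means the share of signed-up users who came back at least once, since the period to measure over is not specified here.

```python
from collections import Counter

PMF_THRESHOLD = 0.40        # from the text: 40% or more "very disappointed"
RETENTION_THRESHOLD = 0.20  # from the text: retention above 20%


def very_disappointed_share(responses):
    """Fraction of survey answers that are 'very disappointed'."""
    if not responses:
        return 0.0
    counts = Counter(r.strip().lower() for r in responses)
    return counts["very disappointed"] / len(responses)


def retention_rate(signed_up, returned):
    """Fraction of signed-up users who came back at least once (assumed definition)."""
    signed_up = set(signed_up)
    if not signed_up:
        return 0.0
    return len(signed_up & set(returned)) / len(signed_up)


def mvp_signal(responses, signed_up, returned):
    """Compute both numbers and compare them with the thresholds above."""
    share = very_disappointed_share(responses)
    retention = retention_rate(signed_up, returned)
    return {
        "very_disappointed_share": share,
        "retention": retention,
        "pmf_signal": share >= PMF_THRESHOLD,
        "retention_ok": retention > RETENTION_THRESHOLD,
    }


if __name__ == "__main__":
    # Hypothetical survey answers and user IDs.
    answers = ["Very disappointed"] * 4 + ["Somewhat disappointed"] * 6
    print(mvp_signal(answers, ["a", "b", "c", "d", "e"], ["a", "b"]))
```

With only a handful of early users, these percentages move a lot with each new response, so treat them as a direction rather than a verdict.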

Here’s a simple three-step feedback loop to run after launch:

- Measure. Track the two numbers above. Also note which features users actually use versus which ones they ignore.

- Learn. Talk to your users directly. Five conversations will tell you more than 500 data points. Ask what’s missing, what’s confusing, and what they’d pay for.

- Adjust. Based on what you learn, decide: iterate on the current direction, pivot to a different user segment or problem, or sunset the product if the signal is consistently weak.

The hardest part is avoiding the sunk cost trap. If your MVP isn’t working after two or three honest iterations, that’s data too. A failed MVP isn’t a failure — it’s a result. The MVP validation checklist can help you run this process systematically, and MVP best practices covers what the iteration phase should look like in practice.

The goal isn’t to fall in love with your MVP. It’s to learn fast enough to build something people actually want.

Why fast launch isn’t always better: Our expert MVP perspective

Here’s something most MVP guides won’t tell you: speed can be the enemy of learning. In 2026, AI tools and no-code platforms make it easier than ever to ship something quickly. That’s mostly good news. But it also creates a new trap — founders launch faster than they can process feedback, and they confuse activity with progress.

The “launch fast” mantra is being challenged in competitive markets where users expect quality from day one. A product that crashes, loses data, or feels unfinished doesn’t just fail to validate — it actively destroys trust you’ll never get back.

What I’ve seen across dozens of builds: the founders who learn fastest aren’t the ones who ship the quickest. They’re the ones who ship deliberately. They define what they’re testing before they build. They set clear success criteria. They treat their MVP like a scientific experiment, not a race.

The Lean Startup’s build-measure-learn loop is still the right framework. But “build” doesn’t mean “ship anything.” It means ship something production-grade, modular, and observable — even if it only does one thing. That’s the standard I hold every MVP to, and it’s the one that actually produces useful learning.

Ready to build your MVP? Get expert guidance

If this guide helped you see your MVP differently, the next step is turning that clarity into action. Building an MVP that’s focused, production-ready, and actually teaches you something is harder than it sounds — especially without a technical background.

At hanadkubat.com, I work directly with non-technical founders to build MVPs in 4 to 12 weeks. No agency overhead, no project manager in the middle, no equity required. You get Fortune 500 engineering discipline applied at founder speed. If you want to understand how design decisions affect your product’s success, the role of UX in MVP development is worth reading before your first build. When you’re ready to move, reach out directly.

Frequently asked questions

Does my MVP need to include all features I envision?

No. Your MVP should include only the single core feature that solves one key user problem. Everything else is a distraction until you’ve validated core product value.

How fast should I try to launch my MVP?

Aim to launch within 3 to 6 weeks, but only after running at least one cheap validation test first. Speed without validation is just expensive guessing.

What is the most common reason MVPs fail after launch?

Most MVPs fail because of fragile architecture, poor data assumptions, and no single accountable owner, not a lack of features. Skipped validation and scope creep are close behind.

How do I know if my MVP is successful?

If 40% or more of surveyed users say they’d be very disappointed if your product disappeared and retention stays above 20%, you have a strong product-market fit signal and can plan the next phase.